What You Should Take Away from GitLab’s Database Deletion and Its Backups


GitLab deleted the wrong database, and when ineffective backup solutions were added to the mix, the site’s system admins had to battle a perfect storm to get the site back online. The takeaway from this situation? Choose your backup solutions carefully.

GitLab, the online tech hub, is facing issues as a result of an accidental database deletion that happened in the wee hours of last night. A tired, frustrated system administrator thought that deleting a database would solve the lag-related issues that had cropped up… only to discover too late that he’d executed the command for the wrong database.

What Went Wrong with GitLab’s Backups

The horror of the incident might have been mitigated by the fact that GitLab had not one but five backup methods in place. The problem was that all five were discovered to be ineffective. Here’s a quick run-through of the different backup methods GitLab had, and what went wrong with each of them:

- The LVM snapshot backup wasn’t up to date: the last snapshot had been created manually by the system admin 6 hours before the database deletion.

- The backup furnished on a staging environment was not functional: it automatically had the webhooks removed, and the replication process from this source wasn’t trustworthy since it was prone to errors.

- Their automatic backup solution was storing backups in an unknown location, and to top it off, it seemed that older backups had been cleaned out.

- Backups stored on Azure were incomplete: they only had data from the NFS server, not from the DB server.

- Another solution that was supposed to upload backups to Amazon S3 wasn’t working, so there were no backups in the bucket.

As a result of these issues, the system admins are struggling to get the 6-hour-old backup online. The progress of the data restoration has been closely followed by well-wishers, and many have appreciated the website’s transparency, especially under such duress.
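Several of the failures above (a stale snapshot, an empty S3 bucket) are the kind that a small scheduled check can surface long before a crisis. What follows is a minimal Python sketch, not GitLab’s tooling: the function name `check_backups`, the idea of a single local backup folder, and the 24-hour threshold are all assumptions made for illustration.

```python
import time
from pathlib import Path


def check_backups(backup_dir, max_age_hours=24.0, now=None):
    """Return a list of human-readable problems found in backup_dir.

    Hypothetical helper: it only inspects files directly inside one
    local folder, which is an assumption made for this sketch.
    """
    now = time.time() if now is None else now
    files = sorted(p for p in Path(backup_dir).iterdir() if p.is_file())
    if not files:
        return ["no backup files found"]

    problems = []
    usable = []
    for p in files:
        # A zero-byte file usually means the backup job failed part-way.
        if p.stat().st_size == 0:
            problems.append(f"{p.name} is empty")
        else:
            usable.append(p)

    if not usable:
        problems.append("no non-empty backup files found")
        return problems

    # The newest usable file shows how much data would be lost right now.
    newest = max(usable, key=lambda p: p.stat().st_mtime)
    age_hours = (now - newest.stat().st_mtime) / 3600
    if age_hours > max_age_hours:
        problems.append(
            f"newest backup {newest.name} is {age_hours:.1f} hours old"
        )
    return problems
```

Run from a scheduler, a check like this would raise an alert whenever the returned list is non-empty. It says nothing about whether a backup can actually be restored, so it complements restore testing rather than replacing it.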

How to Identify a Good Backup Solution

It’s certainly freaky that all five of GitLab’s backup solutions were ineffective, but the incident demonstrates how many things can go wrong with backups. The real aim of any backup solution is to restore data with ease, yet simple oversights can render a backup useless. Backup software should be able to handle all types of failures and provide enough security. This is why you should watch out for the following traits in any backup solution:

- Backup solutions should match your needs

In the case of GitLab, automatic backups were made once every 24 hours. Considering the amount of data being added every minute, however, real-time backups would have suited them far better. The last manual snapshot, taken by the system admin 6 hours before the crash, was not ideal in terms of data conservation, but it was the most viable option. Choosing the right backup solution for your needs means considering how frequently data is added, the level of user activity, and the server load.

- Backup solutions should allow easy, quick restoration

The problem with GitLab’s backups stored on its staging environment was that the replication process was difficult to manage. When you’re already burdened with the responsibility of getting your site back up, you shouldn’t have to worry about the restoration process.

- The backup solution should be completely independent of your site… in a known location

In the GitLab situation, the problem was not knowing the backup destination. This isn’t a problem with WordPress backup solutions, since they usually store backups on your site’s server or in a personal storage account (such as Dropbox, Drive or Amazon S3). However, this means that most of the time they either require you to access your crashed site to reach the backups, or they store the API key to these accounts on your site (which poses its own problems). Both options present Catch-22 situations: the site is down so you need the backups, but you can’t access the backups because the site is down. It’s important to know all there is to know about your backup destinations.

- The backup solution should back up your entire site

Backups that only contain part of your site (such as GitLab’s Azure backups) aren’t really reliable when your site goes down. In the case of WordPress backups, some solutions might back up your site except for custom tables (such as those installed by WooCommerce), so you need to be wary of such situations.

- You should be able to easily test your backups

The real problem with all of GitLab’s backup solutions was that they hadn’t been tested beforehand, so their flaws only came to light once restoration ran into trouble. The backups weren’t discovered to be ineffective until they were actually needed. This is why testing backups should be a part of your backup strategy.
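As a rough illustration of the last two traits, here is a minimal Python sketch that opens a backup archive, reads every file in it, and reports which expected pieces are missing. It assumes the backup is a tar archive. The function name `missing_from_archive` is hypothetical, and the required entries in the usage note below are only an example layout, not what any particular plugin produces.

```python
import tarfile


def missing_from_archive(archive_path, required):
    """Return the entries in `required` that the archive does not contain.

    An entry ending in "/" is treated as a directory and matches if the
    archive holds that directory or anything beneath it.
    """
    with tarfile.open(archive_path) as tar:
        members = tar.getmembers()
        # Read every regular file to the end, so a truncated or corrupt
        # archive raises an error here instead of during a real restore.
        for member in members:
            if member.isfile():
                with tar.extractfile(member) as fh:
                    while fh.read(1024 * 1024):
                        pass
    names = [m.name for m in members]

    def present(entry):
        if entry.endswith("/"):
            bare = entry.rstrip("/")
            return any(n == bare or n.startswith(entry) for n in names)
        return entry in names

    return [entry for entry in required if not present(entry)]
```

For a WordPress site, the required list might be something like `["wp-config.php", "wp-content/", "database.sql"]`, where `database.sql` is an assumed name for the database dump. A check like this only confirms that the archive is readable and that the expected files are there. It cannot tell whether the dump includes custom tables, so a periodic full restore to a scratch environment remains the real test.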

We’re all human at the end of the day, and the job of a systems admin, especially when overloaded with spam, can never be taken lightly. This is why backups exist: to provide an easy ‘undo’ when an error takes your site down or data is lost.

We can only hope that things go well for the GitLab team, as they rush to get their data back.

GitLab’s status can be monitored via this Twitter feed. (When this article was published, 73% of the database copy had been made.)

Tags:

Share it:

You may also like

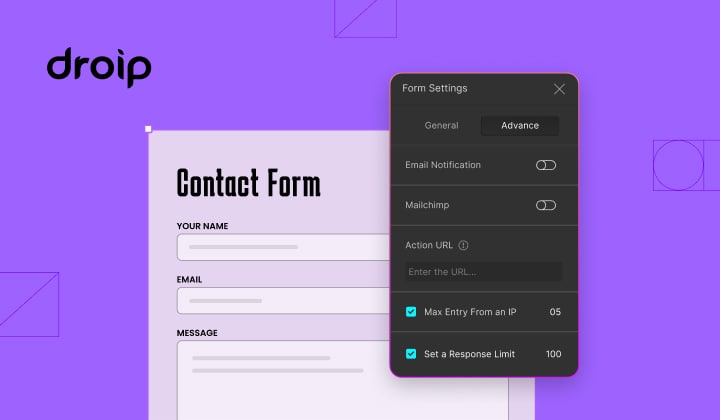

How to Limit Form Submissions with Droip in WordPress

Forms are an indispensable part of any website because of their versatility, letting you collect information for various purposes! However, people with ill intentions often attempt to exploit these forms…

How To Manage Multiple WordPress sites

Management tools help agencies become well-oiled machines. Each task is completed with the least amount of effort and highest rate of accuracy. For people managing multiple WordPress sites, the daily…

PHP 8.3 Support Added to Staging Feature

We’ve introduced PHP version 8.3 to our staging sites. Test out new features, code changes, and updates on the latest PHP version without affecting your live website. Update PHP confidently…

How do you update and backup your website?

Creating Backup and Updating website can be time consuming and error-prone. BlogVault will save you hours everyday while providing you complete peace of mind.

Updating Everything Manually?

But it’s too time consuming, complicated and stops you from achieving your full potential. You don’t want to put your business at risk with inefficient management.

Backup Your WordPress Site

Install the plugin on your website, let it sync and you’re done. Get automated, scheduled backups for your critical site data, and make sure your website never experiences downtime again.